Discovering a dependency confusion vulnerability in a popular third-party Python dependency resolver

A peculiar case of dependency confusion potentially affecting 136M monthly package downloads

How it all started

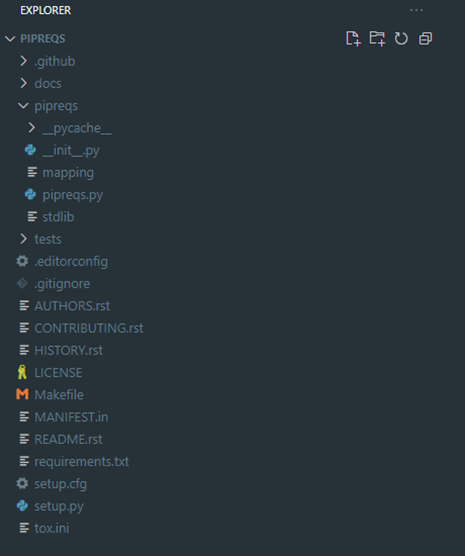

However, many developers favored pipreqs (1, 2, 3, 4).

The tool is quite popular: it has gathered over 5,000 stars on GitHub and, according to PyPI stats, 1.5 million monthly downloads.

Why is that?

pipreqs vs pip freeze

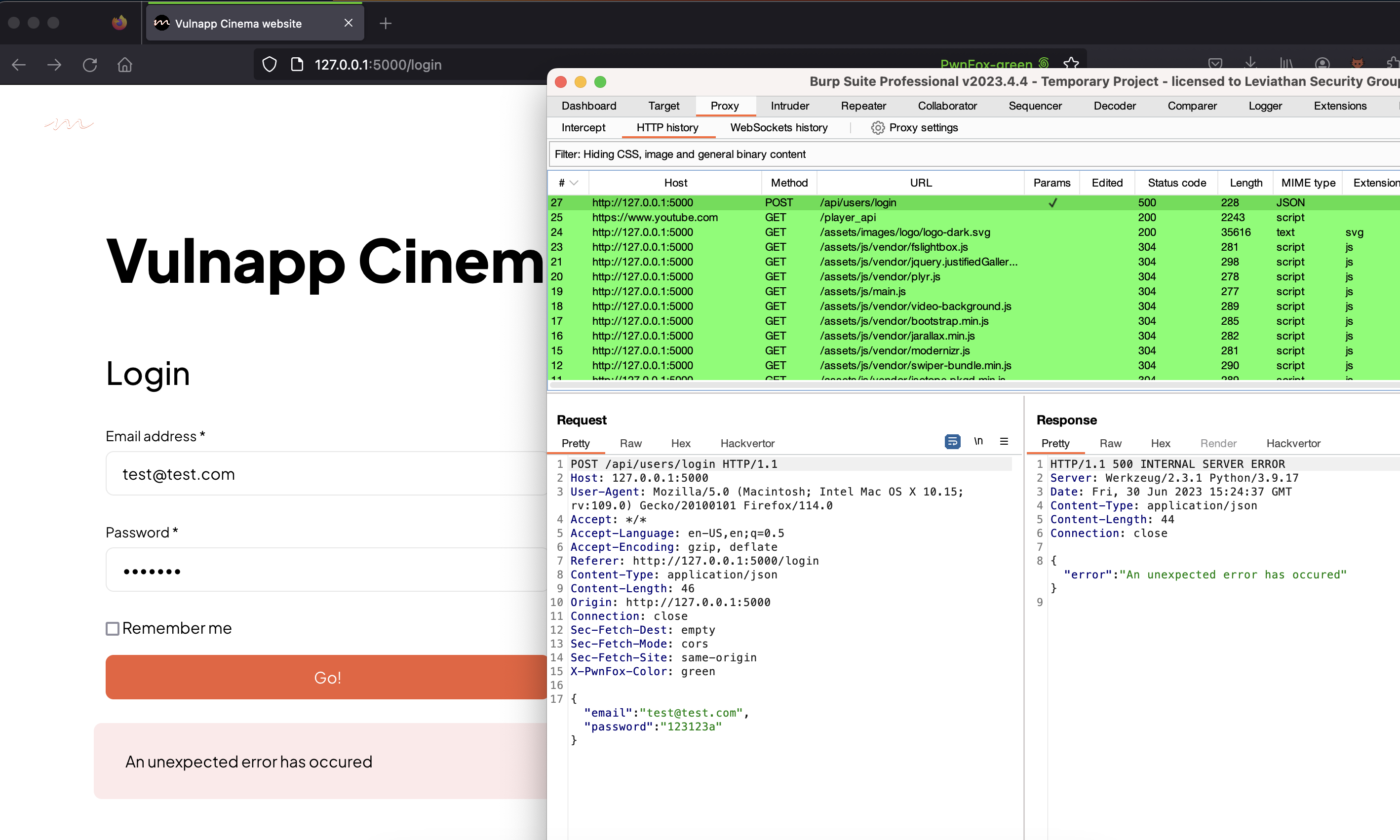

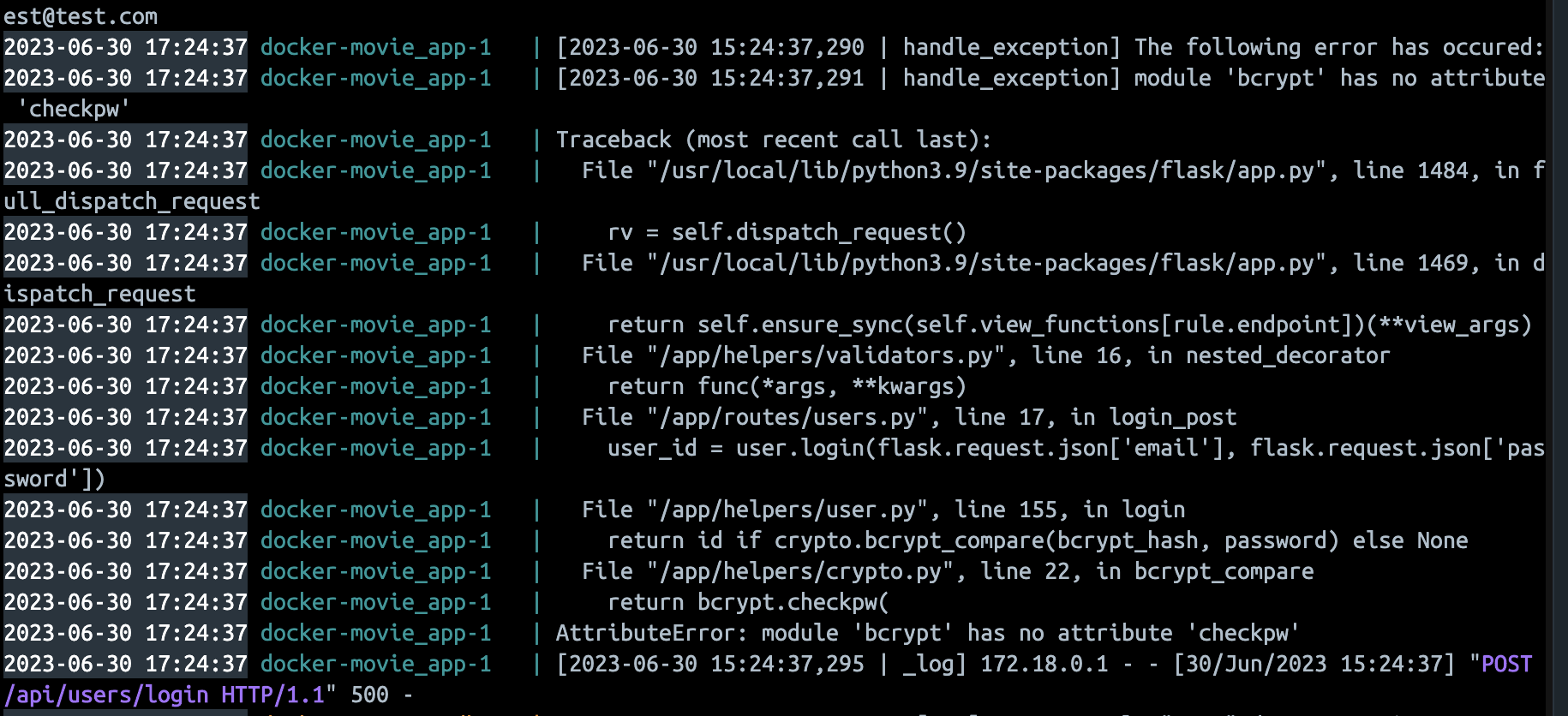
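For context, pipreqs builds requirements.txt from the imports it finds in a project's source files, whereas pip freeze lists every package installed in the current environment. Below is a minimal sketch of the import-collection half of that idea, written with Python's standard ast module. top_level_imports is a hypothetical helper for illustration, not pipreqs' own function:

```python
from __future__ import annotations

import ast


def top_level_imports(source: str) -> set[str]:
    """Collect the top-level module names imported by a piece of Python source."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            # "import a.b.c" -> "a"
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # "from a.b import c" -> "a"; relative imports such as "from . import x" are skipped
            names.add(node.module.split(".")[0])
    return names


code = """
import os
import yaml
from bcrypt import hashpw
from rest_framework_simplejwt.views import TokenObtainPairView
from . import local_helpers
"""
print(sorted(top_level_imports(code)))  # ['bcrypt', 'os', 'rest_framework_simplejwt', 'yaml']
```

The sketch keeps standard-library modules such as os. A real tool has to filter those out before it looks up any packages.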

However, when I launched my newly created Docker container and tried to log in to the website, I was greeted by an unforgiving HTTP 500 error. The logs showed that it was related to the bcrypt module missing an exported method.

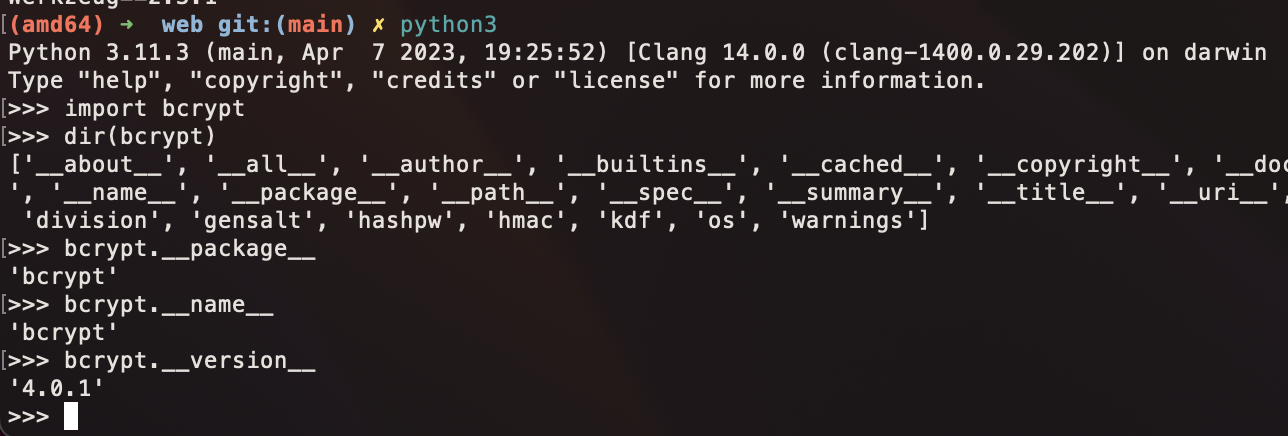

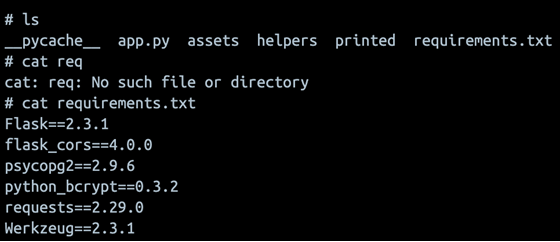

Bcrypt on my laptop:

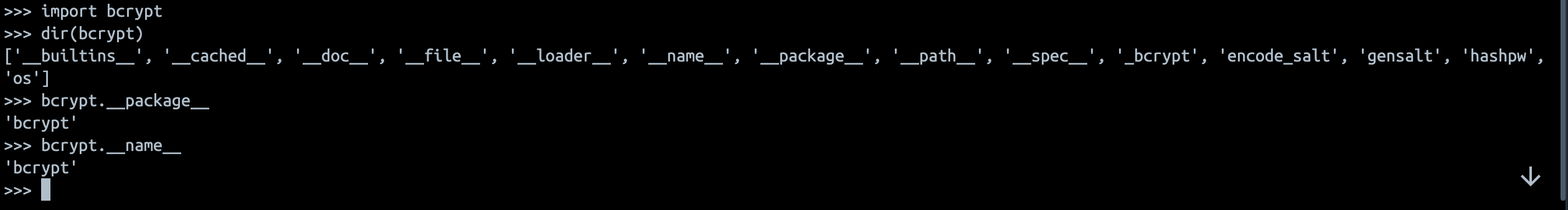

Bcrypt inside Docker container:

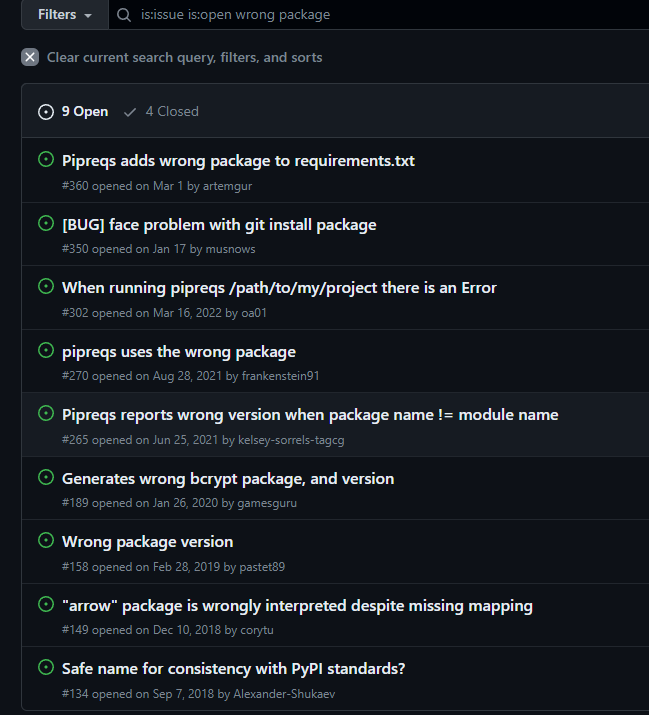

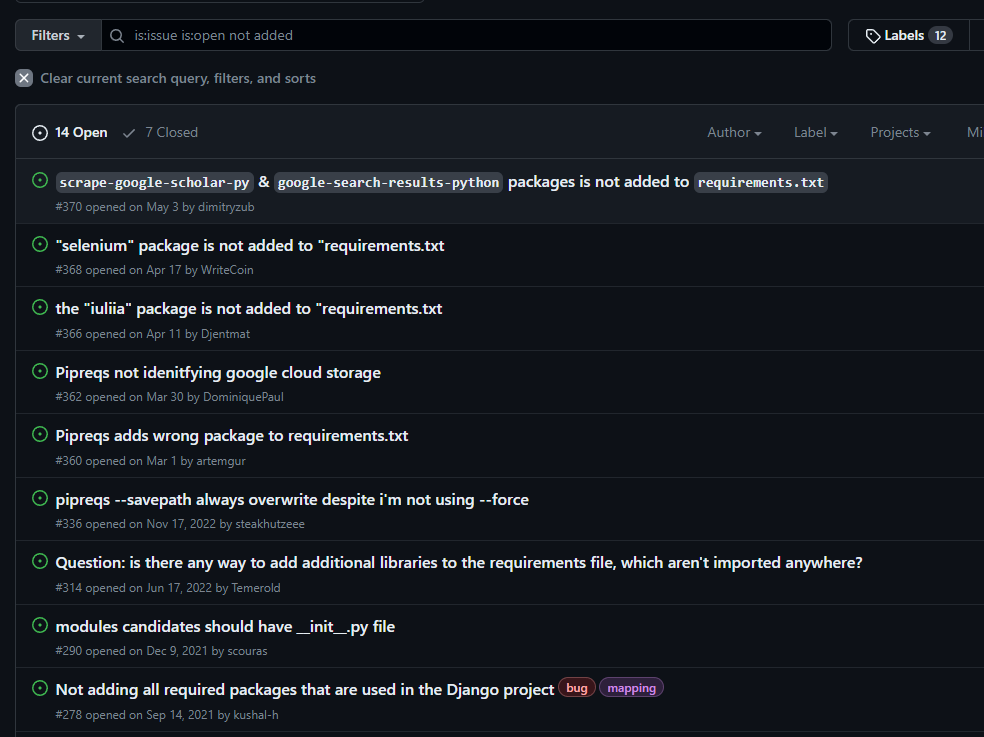

This looked worthy of further investigation, so I decided to search through similar issues on the project’s GitHub page. Sure enough, there were plenty of open tickets that boiled down to the following two problems:

- An imported module was resolved to the wrong PyPI package

- An imported module failed to resolve at all

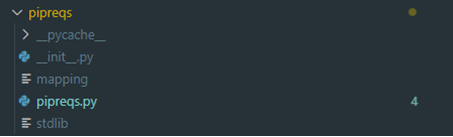

Analyzing the source code

Flawed name resolution

That is the root cause of my bcrypt bug!

It gets way more interesting at line 261, where the script assumes the full import name to be the package name as a fallback.
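The fallback can be sketched as follows. The sketch assumes a mapping file made of import_name:package_name lines, like the one pipreqs ships. The two entries below are illustrative, and parse_mapping and guess_package_names are hypothetical names. Any import without an entry simply becomes its own package name:

```python
from __future__ import annotations


def parse_mapping(text: str) -> dict[str, str]:
    """Parse 'import_name:package_name' lines (assumed format) into a dict."""
    mapping = {}
    for line in text.splitlines():
        line = line.strip()
        if ":" in line:
            module, package = line.split(":", 1)
            mapping[module] = package
    return mapping


def guess_package_names(modules: list[str], mapping: dict[str, str]) -> list[str]:
    """Known imports are translated; unknown ones fall back to the import name itself."""
    return [mapping.get(module, module) for module in modules]


MAPPING = parse_mapping("""
yaml:PyYAML
bs4:beautifulsoup4
""")
print(guess_package_names(["yaml", "rest_framework_simplejwt"], MAPPING))
# ['PyYAML', 'rest_framework_simplejwt']
```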

Why does it matter? Well, keep in mind that the script then tries to resolve the obtained package names into PyPI packages.

This is done simply by querying the package name at the PyPI server:
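Below is a minimal sketch of this kind of lookup. It assumes PyPI's public JSON API at https://pypi.org/pypi/<name>/json, and the function names are hypothetical; it is not pipreqs' own code. The fetch function is injectable, so the resolution logic can be exercised offline:

```python
from __future__ import annotations

import json
import urllib.error
import urllib.request
from typing import Callable, Optional

# Public PyPI JSON API endpoint for a single project.
PYPI_JSON_URL = "https://pypi.org/pypi/{}/json"


def fetch_pypi_metadata(name: str) -> Optional[dict]:
    """Return PyPI's JSON metadata for `name`, or None if no such project exists."""
    try:
        with urllib.request.urlopen(PYPI_JSON_URL.format(name), timeout=10) as resp:
            return json.load(resp)
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return None
        raise


def resolve_remotely(
    names: list[str],
    fetch: Callable[[str], Optional[dict]] = fetch_pypi_metadata,
) -> dict[str, str]:
    """Naive resolution: whichever project answers to the guessed name is trusted."""
    resolved = {}
    for name in names:
        data = fetch(name)
        if data is not None:
            info = data["info"]
            resolved[name] = f"{info['name']}=={info['version']}"
    return resolved


# Offline usage with a hypothetical index instead of the real PyPI.
fake_index = {"example_module": {"info": {"name": "example_module", "version": "9.9.9"}}}
print(resolve_remotely(["example_module", "unknown_module"], fake_index.get))
# {'example_module': 'example_module==9.9.9'}
```

Nothing in this logic checks that the project answering to the guessed name is the one the code actually imports. That is exactly the gap an attacker can fill by registering the name.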

This behavior is the reason for a bunch of tickets (e.g., 1, 2, 3) on GitHub.

But what if the vulnerable package is installed locally?

So far, we can inject unwanted dependencies if the bad package name resolves on PyPI. However, it seems that pipreqs first tries to find it among the locally installed packages. Is this check sufficient to prevent abuse of the name resolution?

Well… no. There are also quite a few issues where imports are mapped to multiple packages, such as this one.

Why?

Consider the following example:

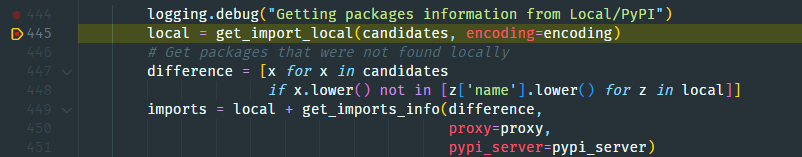

Let’s run pipreqs and step through its execution in the debugger:

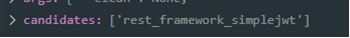

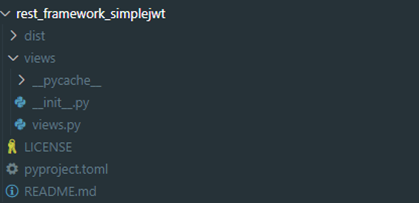

- Pipreqs extracts imported rest_framework_simplejwt module from the code:

- Pipreqs tries to match the rest_framework_simplejwt module with strings in the mapping file, but the package is missing. So, the script still assumes the package name to be rest_framework_simplejwt

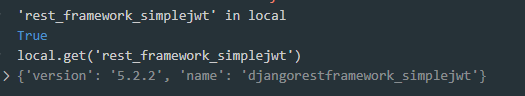

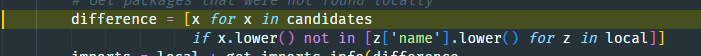

- The script tries to find the rest_framework_simplejwt module in the exports of all locally installed Python packages…and finds it!

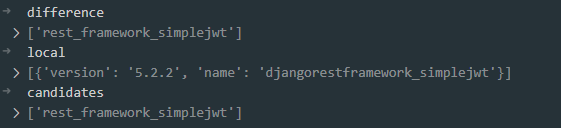

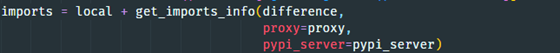

- The import names are then compared to local packages. However! Pipreqs tests again if the name of the exported module (see step 2) is similar to the one of the PyPI package.

- And so, the flawed PyPI package resolve is triggered.
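The steps above can be condensed into a toy model. This is a simplified reconstruction based on the walkthrough, not pipreqs' actual code. The distribution example-dist and its module example_module are hypothetical. The key point is that step 3 matches on exported modules, while step 4 matches on distribution names:

```python
# Hypothetical snapshot of installed distributions and the top-level modules they export.
INSTALLED = [
    {"name": "example-dist", "exports": {"example_module"}},
]


def find_local(candidates, installed):
    """Step 3: find installed distributions exporting any candidate module."""
    wanted = set(candidates)
    return [dist for dist in installed if dist["exports"] & wanted]


def unresolved_by_name(candidates, installed):
    """Step 4 as described: compare candidates with distribution *names*."""
    local_names = {dist["name"].lower() for dist in find_local(candidates, installed)}
    return [c for c in candidates if c.lower() not in local_names]


def unresolved_by_exports(candidates, installed):
    """Alternative for contrast: compare candidates with the exported modules instead."""
    local_exports = set().union(*(dist["exports"] for dist in find_local(candidates, installed)))
    return [c for c in candidates if c not in local_exports]


candidates = ["example_module"]  # step 2: the fallback guess is just the import name
print(find_local(candidates, INSTALLED))             # step 3: the module is found locally...
print(unresolved_by_name(candidates, INSTALLED))     # step 4: ...but still left for PyPI: ['example_module']
print(unresolved_by_exports(candidates, INSTALLED))  # []
```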

How to check if a package is vulnerable?

What’s the impact?

Suppose we can meet all three conditions above and register a rogue PyPI package that matches the imported Python modules of a vulnerable package the targeted users depend on. In that case, pipreqs will add it to requirements.txt, enabling us to achieve RCE on the systems of users who install those requirements!

But more importantly, how many PyPI packages with mismatched names are there?

Analyzing the PyPI attack surface

What is our plan?

- Get the 5,000 most downloaded PyPI packages for this month (March 2023).

- Download these packages locally and extract their exported Python modules (Yikes! At least we don’t need to install them.)

- Search for vulnerable packages that meet the aforementioned criteria:

1. Python module name ≠ PyPI package name

2. Python module name not in the pipreqs mapping file

3. Python module name available at PyPI as a package name

- Attempt to exploit one package as an example

- ???

- Profit!
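The three criteria above can be written as a small filter. This sketch rests on two assumptions. First, names are compared after PEP 503 normalization, so typing_extensions and typing-extensions count as the same name. Second, criterion 3 is left as a caller-supplied predicate, since checking it requires querying PyPI. is_candidate and the sample data are hypothetical:

```python
from __future__ import annotations

import re
from typing import Callable


def normalize(name: str) -> str:
    """PEP 503 name normalization: runs of '-', '_' and '.' become '-', then lowercase."""
    return re.sub(r"[-_.]+", "-", name).lower()


def is_candidate(
    module: str,
    package: str,
    mapping: dict[str, str],
    available_on_pypi: Callable[[str], bool],
) -> bool:
    """Apply the three criteria to one (import name, distribution name) pair."""
    return (
        normalize(module) != normalize(package)  # 1. module name differs from package name
        and module not in mapping                # 2. module is absent from the pipreqs mapping
        and available_on_pypi(module)            # 3. caller-defined PyPI check (hypothetical)
    )


# Illustrative inputs: one mapped module, one name that only differs by separators,
# and one genuinely mismatched, unmapped module.
mapping = {"yaml": "PyYAML"}
pairs = [("yaml", "PyYAML"), ("typing_extensions", "typing-extensions"), ("example_module", "example-dist")]
pypi_names = {"example-module"}  # hypothetical result of querying PyPI
hits = [m for m, p in pairs if is_candidate(m, p, mapping, lambda n: normalize(n) in pypi_names)]
print(hits)  # ['example_module']
```

The conditions are chained with `and`, so the potentially expensive PyPI check only runs for modules that already pass the first two criteria.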

PyPI publishes a neat dataset of package-related information, including download statistics, on Google BigQuery.

We can easily automate querying it with the help of the open-source CLI tool pypinfo.

Running pypinfo to get the top 5,000 projects by monthly downloads in March 2023 is as simple as:

We are left with the following JSON file:

The JSON file was processed to obtain all PyPI packages that can be misinterpreted by pipreqs. Next, the results were manually reviewed to filter out false positives.

Time for some quick statistics. Out of the 5,000 most downloaded packages, we found 149 to be vulnerable (about 3% of the total). Combined, the vulnerable PyPI packages were downloaded 136,433,596 times that month.
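The arithmetic behind these figures is a filter and a sum over the download rows. The sketch assumes the pypinfo JSON output contains a rows list with project and download_count fields. The three rows here are made up:

```python
from __future__ import annotations

import json

# Assumed shape of pypinfo's JSON output; the rows below are made-up sample data.
RAW = """
{"rows": [
  {"project": "pkg-a", "download_count": 1000},
  {"project": "pkg-b", "download_count": 250},
  {"project": "pkg-c", "download_count": 50}
]}
"""


def summarize(raw_json: str, vulnerable: set[str]) -> tuple[int, float, int]:
    """Return (vulnerable count, share of all rows, total downloads of vulnerable rows)."""
    rows = json.loads(raw_json)["rows"]
    hits = [row for row in rows if row["project"] in vulnerable]
    share = len(hits) / len(rows)
    downloads = sum(row["download_count"] for row in hits)
    return len(hits), share, downloads


count, share, downloads = summarize(RAW, {"pkg-b", "pkg-c"})
print(count, f"{share:.1%}", downloads)  # 2 66.7% 300
```

Applied to the real data, 149 / 5000 = 0.0298, which rounds to the 3% quoted above.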

Proof of Concept

Creating a working exploit is trivial. All we need to do is package our code properly, as described in the official PyPI docs. Our malicious package will mimic the directory structure of the original package:

views.py will contain two dummy classes to provide the import names:

__init__.py will provide the main functionality that will contain our malicious code. For the sake of demonstration, our RCE will only print out a warning message:

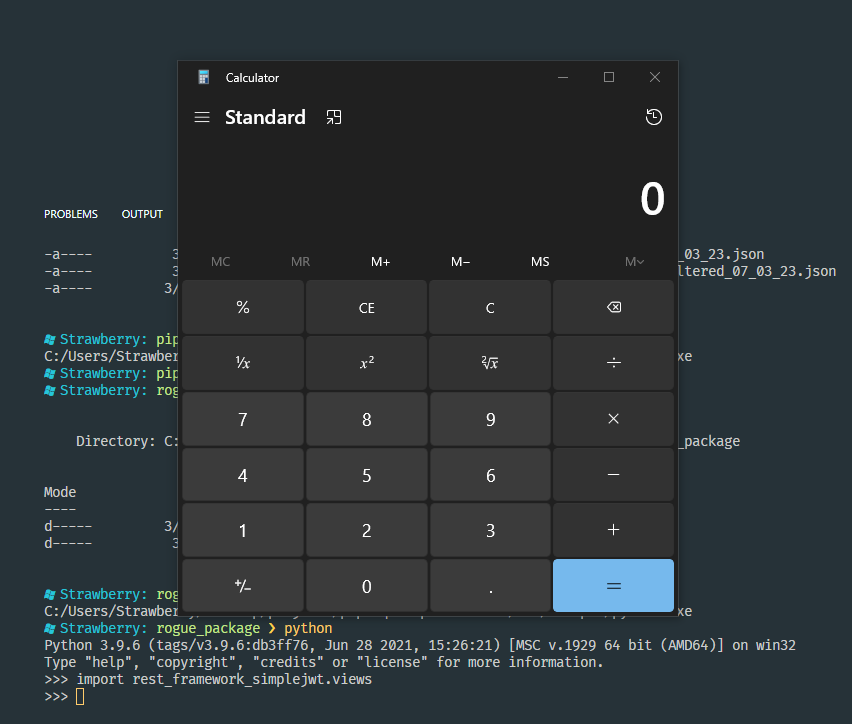

This is what happens if we import the malicious package:

Obviously, we can do whatever we want with the system at this point. For example, pop a classic calc.exe process:

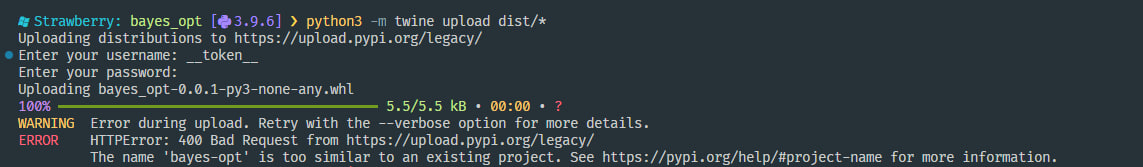
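Both the warning and the calc.exe example rely on the same language behavior. Python runs the module-level code in a package's __init__.py as soon as the package, or any of its submodules, is imported. Below is a harmless, self-contained sketch that builds a hypothetical package, demo_pkg, in a temporary directory. The payload is replaced by a print:

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Build a throwaway package in a temporary directory; "demo_pkg" is a hypothetical name.
with tempfile.TemporaryDirectory() as tmp:
    pkg = Path(tmp) / "demo_pkg"
    pkg.mkdir()
    # Module-level code in __init__.py runs on import; this payload only prints and records.
    (pkg / "__init__.py").write_text(
        'IMPORT_LOG = ["demo_pkg __init__ executed"]\n'
        'print("WARNING: demo_pkg.__init__ ran at import time")\n'
    )
    # Two dummy classes, so that importing names from demo_pkg.views succeeds.
    (pkg / "views.py").write_text("class FirstView:\n    pass\n\n\nclass SecondView:\n    pass\n")

    sys.path.insert(0, tmp)
    importlib.invalidate_caches()  # make sure the freshly written files are visible
    try:
        # Importing only a submodule still executes demo_pkg/__init__.py first.
        from demo_pkg.views import FirstView, SecondView
    finally:
        sys.path.remove(tmp)

demo_pkg = sys.modules["demo_pkg"]
print(demo_pkg.IMPORT_LOG)  # ['demo_pkg __init__ executed']
```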

All that’s left is to appropriately fill out all metadata files, build the package, and publish it to PyPI:

Building the package:

Publishing the package:

The question now is: can we replicate the exploit in the wild? Yes!

Is it that bad?

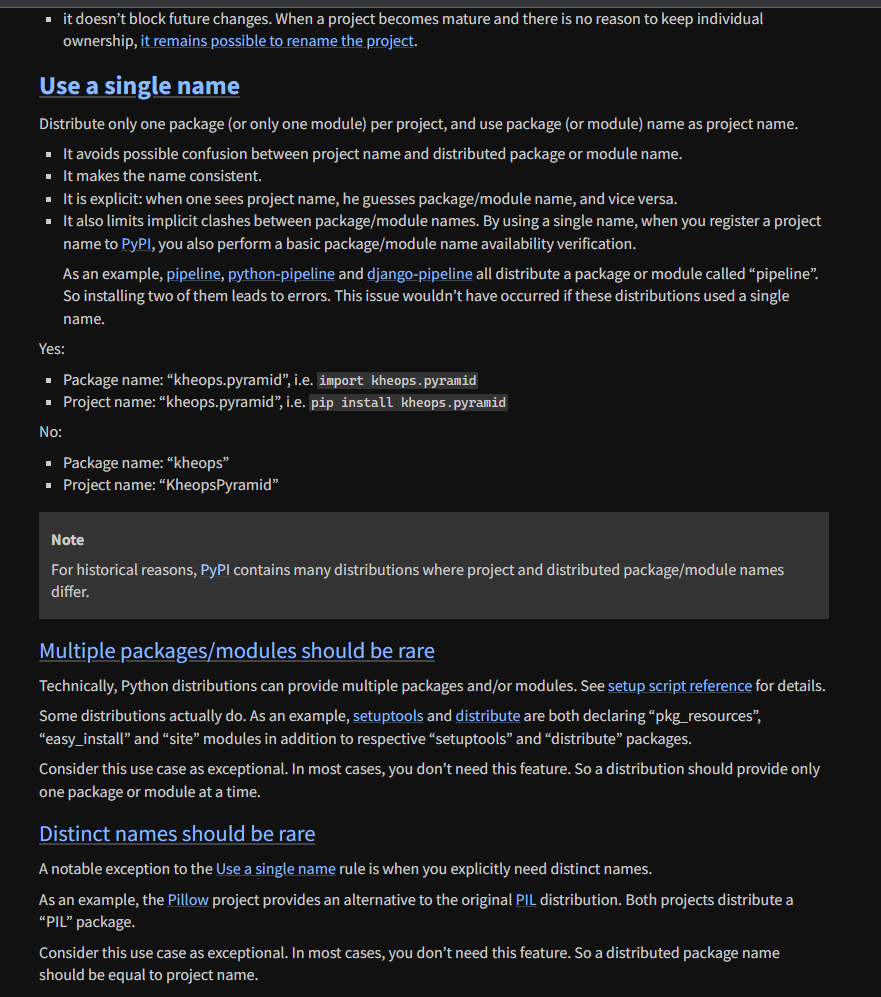

Naming confusion is a long-standing problem in the Python packaging ecosystem. There have been numerous proposals to address it, including PEP 423. Although some developers try to follow these vague guidelines, others do not.

However, while developing the PoC, I noticed that I could not upload rogue packages for some genuinely vulnerable names to PyPI, because PyPI considered the name “too similar” to an existing project.

Thankfully, PyPI has been trying to do damage control over naming confusion for quite some time now (e.g., 1, 2, 3).

PyPI enforces these additional rules on package names, which limit which names can be exploited in public:

Although these rules sometimes prevent the attack, many exposed packages remain in the wild, as the PoC has already shown.

What can be done about this?

Although we can’t really fix the root cause of the bug (the remote dependency resolution mechanism), it is possible to build upon the existing local resolution code to avoid having to use remote PyPI resolution in the first place.

A pull request with fixes to the code includes these changes:

Here is the new pipreqs version’s output for the same code as above:

Additionally, I added a warning to the CLI output that is displayed when pipreqs runs with remote resolution enabled. The message encourages users to review the final list of requirements:

Aftermath

April 14th, 2023: The pipreqs creator, bndr (thank you!), was quick to respond and merge a bunch of commits, including mine, into the main branch and release pipreqs 0.4.13.

May 16th, 2023: The vulnerability was triaged by the MITRE CVE team and assigned CVE-2023-31543.

July 10th, 2023: CVE disclosure.
